vibecheck: auditing ai code against the plan

part of my ai coding workflow. this is the final checkpoint before committing: did we actually build what we planned?

the problem

ai assistants are eager to help. too eager. you ask for a feature, they build the feature, then add "helpful" extras:

- "i also refactored the adjacent module for consistency"

- "added comprehensive error handling throughout"

- "created a utility function we might need later"

none of that was in the plan. it's gold-plating. and it creates:

- untested code paths

- unexpected behavior changes

- scope creep that compounds

- commits that don't match their message

the test-first enforcement and plan critique happen BEFORE implementation. but what about AFTER? how do you verify the implementation matches the plan?

the solution

a slash command called /vibe-check that audits what was built against what was planned.

it's not a hook. it's a manual checkpoint i run before committing. the name is deliberately casual because it's a sanity check, not a gate.

how it works

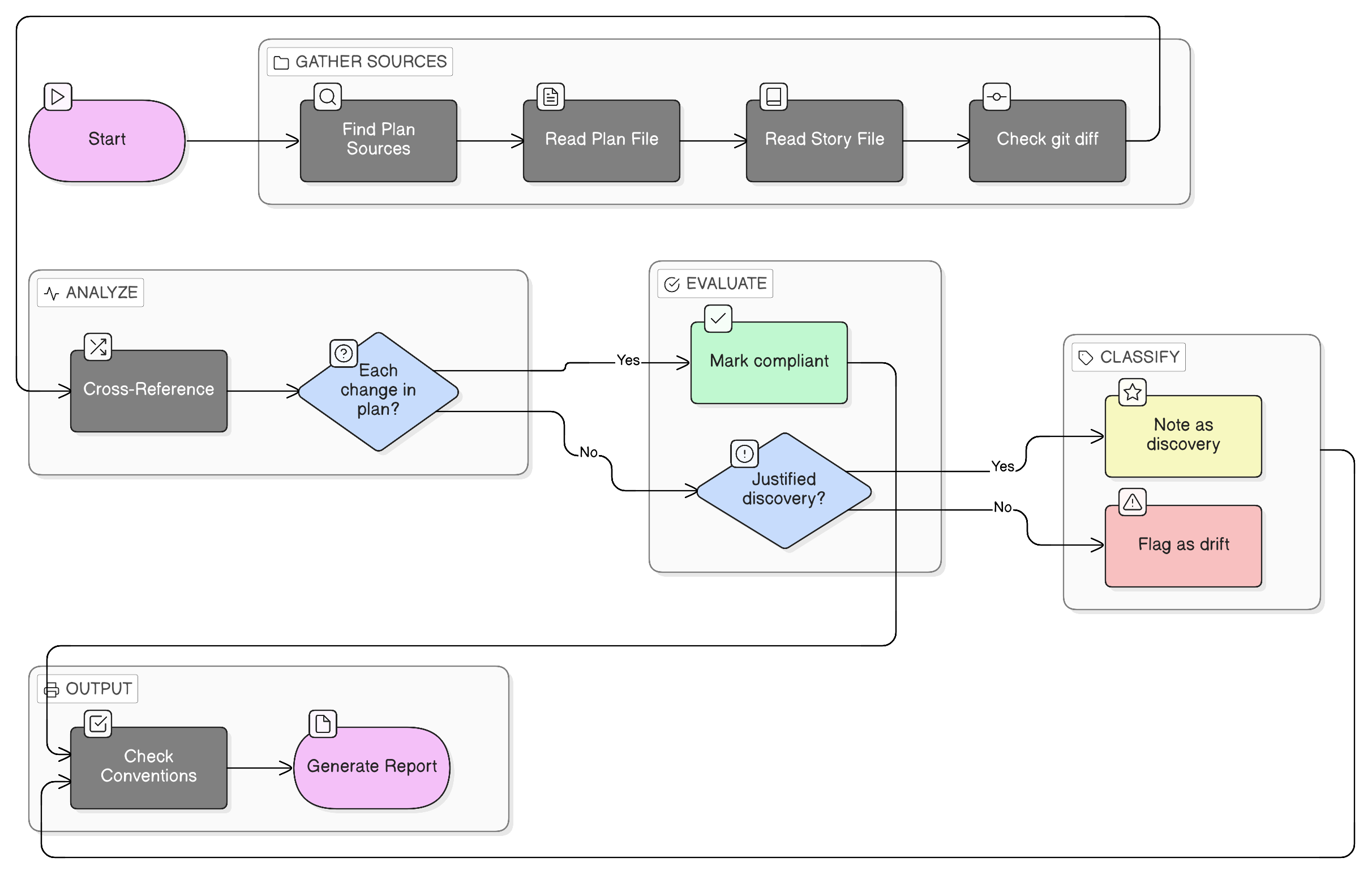

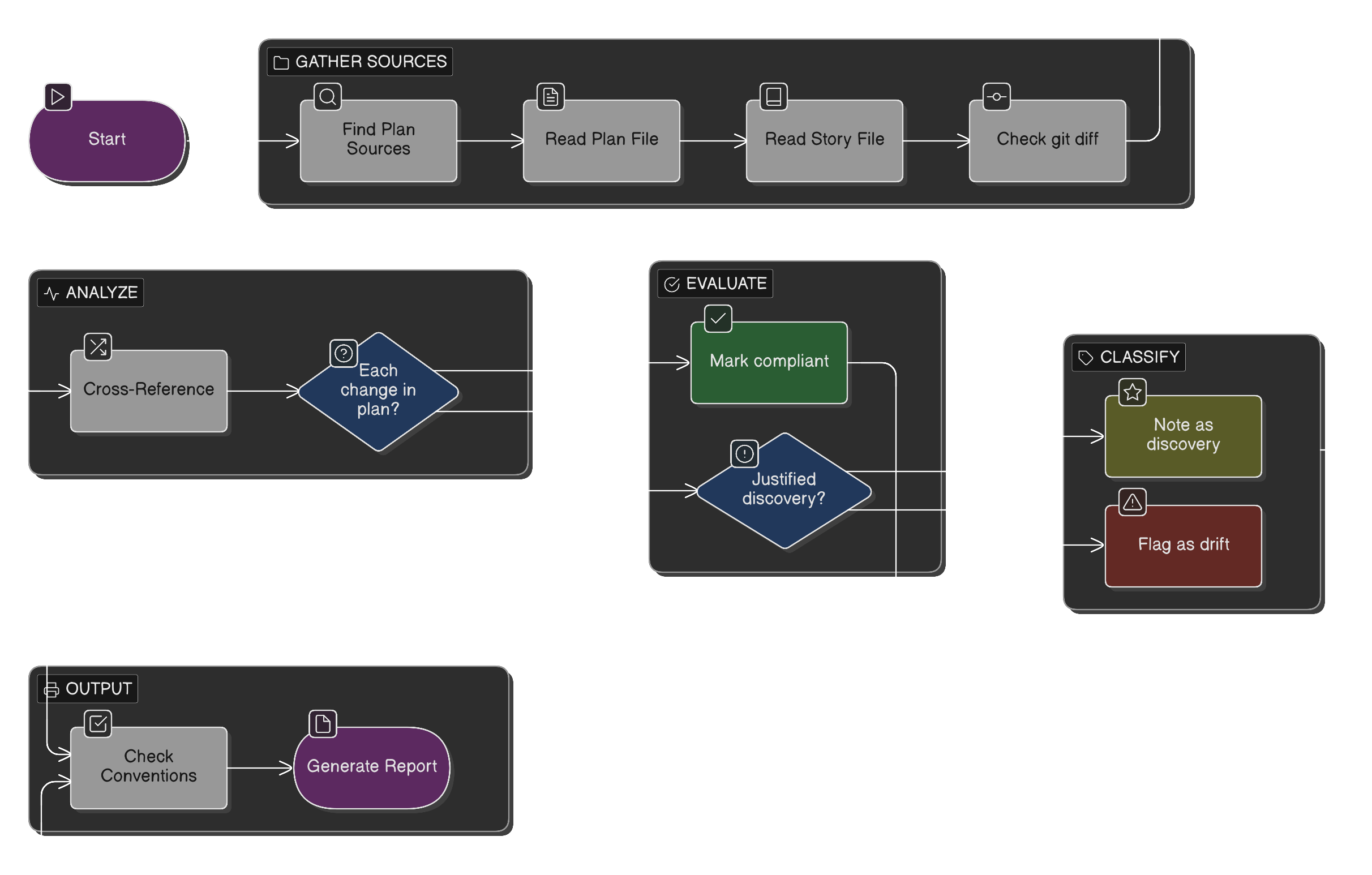

the command lives in .claude/commands/vibe-check.md and instructs claude to:

step 1: find the plan sources

the command tells claude where to look:

**A. Claude Plan File (primary)**

# get the most recent plan file

ls -t ~/.claude/plans/*.md | head -1

**B. Story File (if referenced)**

- story files live in your docs folder

- pattern: docs/stories/3.8-*.md

**C. ADR (if architectural work)**

- check docs/decisions/*.md for relevant ADRs
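if you'd rather do the plan lookup from a script than from the shell, here's a minimal python sketch of the same "newest file wins" idea. the function name latest_plan is made up for illustration, and it assumes plans are plain .md files in a single directory, like the ls command above does.

```python
from pathlib import Path
from typing import Optional


def latest_plan(plans_dir: Path) -> Optional[Path]:
    """Return the most recently modified .md file in plans_dir, or None.

    Hypothetical helper: mirrors `ls -t <dir>/*.md | head -1`.
    """
    if not plans_dir.is_dir():
        return None  # no plans directory at all
    plans = list(plans_dir.glob("*.md"))
    if not plans:
        return None  # directory exists but holds no plan files
    # newest modification time first, same ordering as `ls -t`
    return max(plans, key=lambda p: p.stat().st_mtime)
```

calling latest_plan(Path.home() / ".claude" / "plans") would point it at the same folder the shell command reads. returning None instead of raising makes the "no plan to audit against" case explicit.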

step 2: read and compare

# most recent claude plan

cat "$(ls -t ~/.claude/plans/*.md | head -1)"

# what changed this session

git diff --name-only HEAD~5 # recent commits

git diff --name-only # uncommitted
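two things worth knowing about these commands: HEAD~5 is only a guess at how far back the session goes (and it fails to resolve in a repo with fewer than six commits), and neither git diff call lists untracked files, so a brand new file that was never staged won't show up. a rough python sketch that gathers all three sets might look like this. the function names are hypothetical, and the git ls-files call is an addition that isn't part of the original command.

```python
import subprocess
from typing import List, Set


def parse_name_only(output: str) -> Set[str]:
    """Turn newline-separated git output into a set of paths."""
    return {line.strip() for line in output.splitlines() if line.strip()}


def run_git(args: List[str]) -> str:
    """Run a git command and return stdout; empty string on any failure."""
    try:
        result = subprocess.run(["git", *args], capture_output=True, text=True)
    except FileNotFoundError:
        return ""  # git is not installed
    return result.stdout if result.returncode == 0 else ""


def session_changes(base: str = "HEAD~5") -> Set[str]:
    """Union of paths changed since base, unstaged edits, and untracked files."""
    # working tree compared against the base commit
    since_base = parse_name_only(run_git(["diff", "--name-only", base]))
    # working tree compared against the index (unstaged edits)
    unstaged = parse_name_only(run_git(["diff", "--name-only"]))
    # assumption: untracked, non-ignored files count as session work too
    untracked = parse_name_only(
        run_git(["ls-files", "--others", "--exclude-standard"])
    )
    return since_base | unstaged | untracked
```

swallowing git errors keeps the sketch short, but a real audit would probably want to surface them, since an empty result from a bad base ref looks the same as "nothing changed".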

step 3: cross-reference

for each file in git diff:

- was it in the plan's "files to create" or "files to modify"?

- if not, is it a justified discovery or gold-plating?

step 4: check conventions

the command includes a checklist of project conventions from CLAUDE.md:

| Convention | Check |
|------------|-------|
| **Test-first** | tests written before implementation? |
| **Protocol-based** | new services implement protocols? |
| **Dependency injection** | services via deps.py? |
| **Idempotent** | check state before doing work? |
| **Type hints** | all functions typed? |
| **Graceful degradation** | optional services fail gracefully? |
| **No over-engineering** | only requested changes? |
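to make a few of those rows concrete, here's a hypothetical python sketch of what "protocol-based", "type hints", and "graceful degradation" can look like together. SalesProvider and get_regional_clients are names that appear elsewhere in this post; the signature, the FlakyBackend class, and the choice of ConnectionError are invented for illustration and aren't the project's real code.

```python
from typing import List, Protocol


class SalesProvider(Protocol):
    """Protocol-based: callers depend on this shape, not on a concrete class."""

    def get_regional_clients(self, region: str) -> List[str]: ...


class FlakyBackend:
    """Hypothetical stand-in for an optional service that is down."""

    def get_regional_clients(self, region: str) -> List[str]:
        raise ConnectionError("backend unavailable")


class StaticBackend:
    """Hypothetical stand-in for a healthy service."""

    def get_regional_clients(self, region: str) -> List[str]:
        return [f"{region}-client-1"]


def regional_clients(provider: SalesProvider, region: str) -> List[str]:
    """Graceful degradation: an unavailable provider yields [] instead of raising."""
    try:
        return provider.get_regional_clients(region)
    except ConnectionError:
        return []
```

neither backend inherits from SalesProvider. they satisfy it structurally, which is the point of checking "new services implement protocols" rather than "new services subclass a base".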

step 5: generate report

the output is a structured audit:

## Vibe Check: 2026-01-27

### Sources

- **Plan:** happy-paper-salesman.md

- **Story:** 3.17-client-query-service.md

- **ADR:** DATABASE_SCHEMA.md

### Plan Adherence

| Plan Item | Status | Notes |
|-----------|--------|-------|
| Create src/app/models/client.py | ✓ | |
| Modify src/app/services/sales.py | ✓ | |
| Modify src/app/protocols.py | ✓ | |
| Add 13 tests | ✓ | |

### Unplanned Work

| Change | Justified? | Notes |
|--------|------------|-------|
| Updated models/__init__.py | ✓ | required for exports |

### Convention Compliance

| Convention | Status | Notes |
|------------|--------|-------|
| Test-first | ✓ | tests written first |
| Protocols | ✓ | added to SalesProvider |
| Types | ✓ | ClientType Literal |
| Graceful degradation | ✓ | returns [] on error |

### Verdict

**CLEAN**
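in the actual workflow claude writes this report, so no rendering code is needed. but if you wanted to produce the same table layout from a script (say, to post the audit somewhere), a small sketch could look like this. render_table is a hypothetical helper, not something the command ships with.

```python
from typing import Iterable, Sequence


def render_table(headers: Sequence[str], rows: Iterable[Sequence[str]]) -> str:
    """Render a markdown table in the same style as the report above."""
    lines = [
        "| " + " | ".join(headers) + " |",
        # separator width follows each header: len(header) + 2 dashes
        "|" + "|".join("-" * (len(h) + 2) for h in headers) + "|",
    ]
    for row in rows:
        lines.append("| " + " | ".join(row) + " |")
    return "\n".join(lines)


if __name__ == "__main__":
    print(
        render_table(
            ("Plan Item", "Status", "Notes"),
            [("Create src/app/models/client.py", "✓", ""), ("Add 13 tests", "✓", "")],
        )
    )
```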

real example

say i'm building a client lookup feature for dunder mifflin infinity. the plan was to implement get_regional_clients() with 13 specific tests.

after implementation, /vibe-check found:

plan adherence: all 4 files modified as planned, all 13 tests implemented.

unplanned work: one file (models/__init__.py) was modified even though it wasn't in the plan. verdict: justified. the plan said "export ClientType for reuse", which implies updating the init file.

conventions: all passed. test-first followed, protocol updated, types complete, graceful degradation implemented.

verdict: CLEAN

no drift. no gold-plating. ready to commit.

the command file

here's the structure of the command (.claude/commands/vibe-check.md):

# Check

Audit whether we stayed the course. did we stick to the plan

and follow CLAUDE.md conventions?

## Instructions

### 1. Find the Plan Sources

Check these locations:

**A. Claude Plan File (primary)**

\`\`\`bash

ls -t ~/.claude/plans/*.md | head -1

\`\`\`

Read this file; it contains the implementation plan.

**B. Story File (if referenced)**

- scan conversation for story references like "Story 3.8"

- story files live in your docs/stories/ folder

**C. ADR (if architectural work)**

- check docs/decisions/*.md for relevant ADRs

### 2. Read the Sources

\`\`\`bash

cat "$(ls -t ~/.claude/plans/*.md | head -1)"

git diff --name-only HEAD~5

git diff --name-only

\`\`\`

### 3. Cross-Reference

From the plan file, check each section:

- "Files to Create": were they all created?

- "Files to Modify": were they all modified?

- implementation steps: completed in order?

For each change we made:

- was it in the plan? (Y/N)

- if not, justified discovery or gold-plating?

### 4. Check CLAUDE.md Conventions

| Convention | Check |
|------------|-------|
| **Test-first** | tests written before implementation? |
| **Protocol-based** | new services implement protocols? |
| **Dependency injection** | services via deps.py? |
| **Type hints** | all functions typed? |
| **Graceful degradation** | optional services fail gracefully? |
| **No over-engineering** | only requested changes? |

### 5. Report

[structured output template]

### Verdict

**[CLEAN / MINOR DRIFT / OFF TRACK]**

be honest. catching drift early saves debugging later.

when drift happens

not every vibecheck is clean. when there's drift:

MINOR DRIFT means small additions that weren't planned but are reasonable:

- added a log statement

- fixed an unrelated typo

- updated a comment

OFF TRACK means significant unplanned work:

- refactored the SalesRep module "while i was in there"

- added regional filtering not in the story

- changed behavior of existing Client queries

for minor drift, i usually let it slide. for off track, i either:

- revert the unplanned changes

- create a separate commit/PR for them

- update the plan retroactively (if it was genuinely needed)
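the roll-up from individual findings to a verdict is simple enough to write down. in this sketch each unplanned change has already been labeled "justified", "minor", or "major" by a human (or by claude). those three labels and the verdict function are assumptions for illustration; the hard part, deciding which label applies, still takes judgment.

```python
from typing import Iterable, Literal

Severity = Literal["justified", "minor", "major"]
Verdict = Literal["CLEAN", "MINOR DRIFT", "OFF TRACK"]


def verdict(unplanned: Iterable[Severity]) -> Verdict:
    """Roll labeled unplanned changes up into one verdict; the worst label wins."""
    severities = set(unplanned)
    if "major" in severities:
        return "OFF TRACK"    # e.g. a refactor nobody asked for
    if "minor" in severities:
        return "MINOR DRIFT"  # e.g. a stray log statement
    return "CLEAN"            # nothing unplanned, or only justified discoveries
```

with this rule the example earlier in the post (one justified change to models/__init__.py) comes out CLEAN, and a single major finding outweighs any number of minor ones.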

why manual, not automated

unlike the plan critique hook, vibecheck is manual. reasons:

- context matters. some drift is fine, some isn't. judgment required.

- not every session needs it. quick fixes don't need audits.

- it's a conversation. sometimes i want to discuss the drift with claude.

the goal isn't bureaucracy. it's catching problems before they compound.

the workflow

Plan → Critique Hook → Implement → /vibe-check → Commit

the critique hook blocks bad plans. the vibecheck catches drift from good plans.

together they create a closed loop: what you planned is what you built.

related

- my ai coding workflow: the full system

- multi-llm plan critique: pre-implementation validation

- test-first enforcement: ensuring tests are in the plan

staying on course is harder than starting on course. the vibecheck is how i verify we got there.