the system

Version history

- v2.1: Added thoughts/ directory structure and automation table

- v2: Added council planning, hooks automation for handoffs

- v1: Original workflow overview

i've mass-produced slop. watched context windows fill with garbage. let ai drift into gold-plating while i nodded along. shipped a product that was fragile and slow because the codebase was already polluted by the time i knew better.

this system is how i stopped.

the problem

when you're coding with an llm, the failure modes are subtle:

- drift. you ask for X, you get X plus a bunch of "improvements" you didn't ask for

- overconfidence. the model commits to an approach without considering alternatives

- no accountability. there's no record of what you agreed to build vs what got built

- blind spots. one model might miss something obvious that another would catch

i wanted a system that catches these before i'm 500 lines deep into the wrong solution.

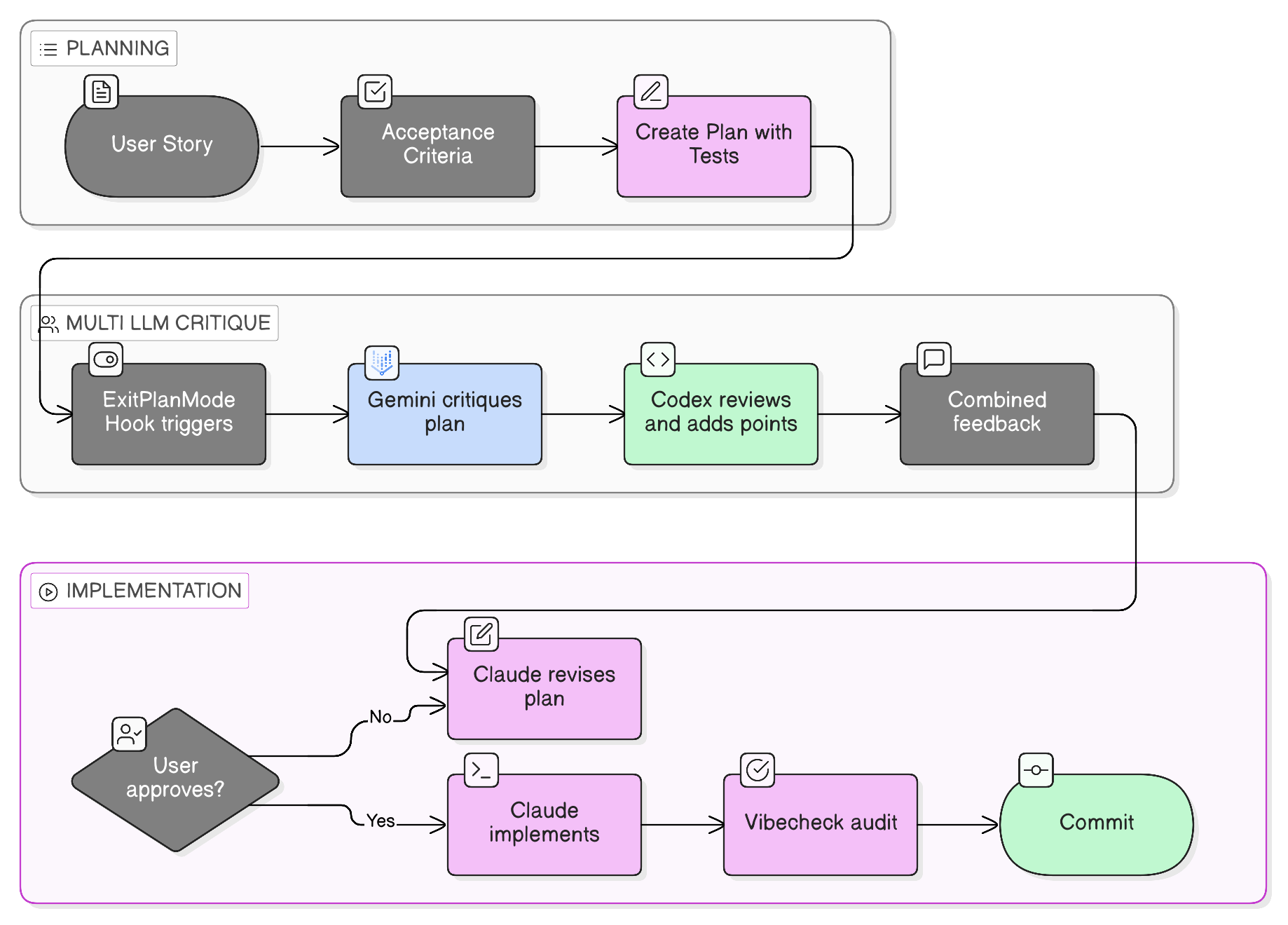

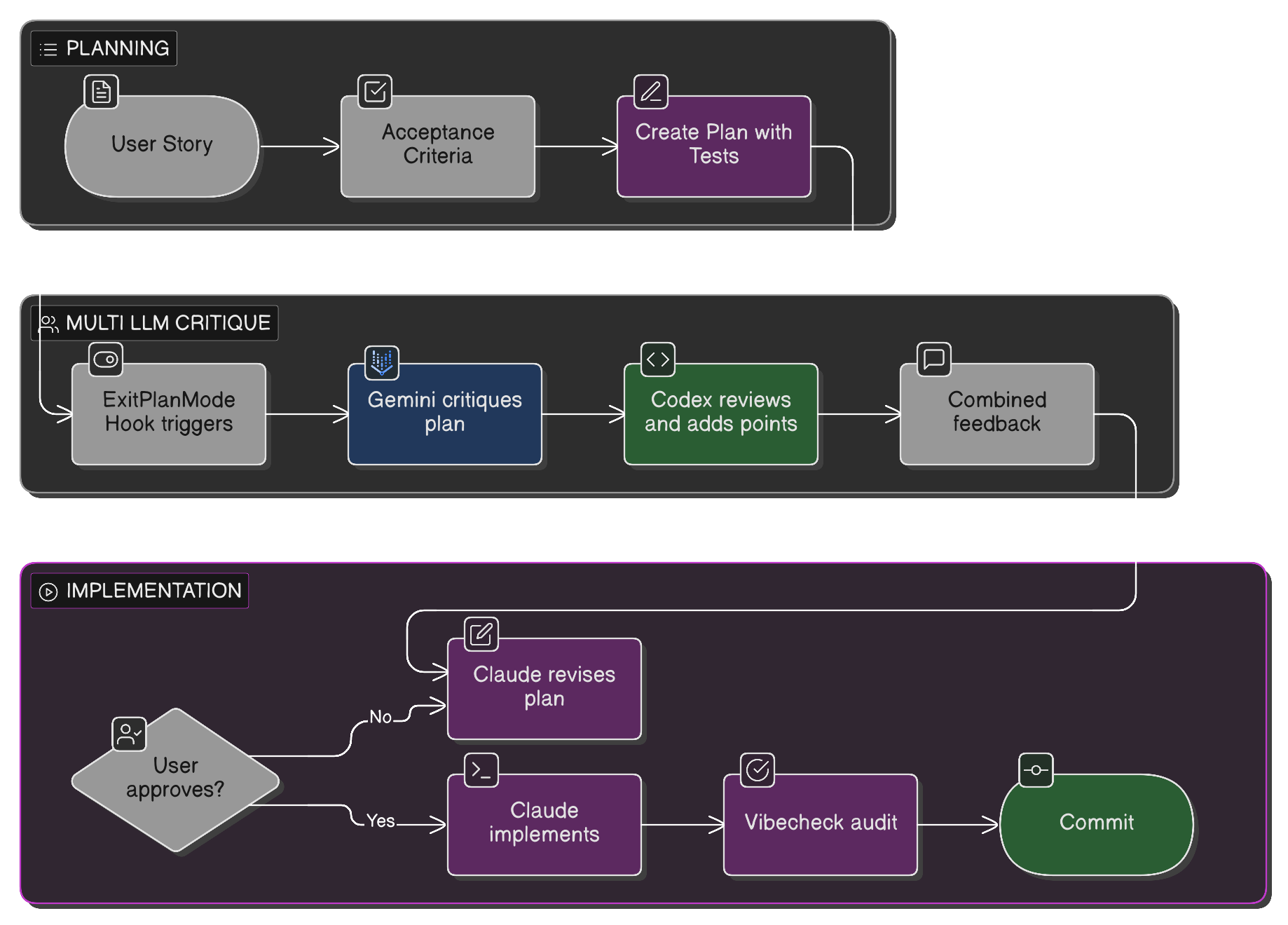

the workflow

let me break this down.

1. start with a user story

not because i'm doing capital-A Agile, but because it forces me to articulate what i actually want before touching code. something like:

As a user viewing a collection, I want to see who created it so I can understand its context.

simple. one sentence. if i can't write this, i don't understand the feature yet.

2. acceptance criteria

what does "done" look like? i write these before any planning, using the context-behavior-constraint (CBC) format:

- creator name appears below collection title

- links to creator's profile

- shows "Anonymous" if no creator set

- works on mobile

these become the checklist at the end. the CBC format makes them directly testable. each behavior maps to a test.
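a minimal sketch of how two of those criteria could map to tests. `Collection`, `creator_label`, and the `/users/<slug>` url shape are hypothetical names made up for illustration, not the real project's code:

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Collection:
    # hypothetical model with just enough fields for the example
    title: str
    creator_name: Optional[str] = None
    creator_slug: Optional[str] = None


def creator_label(collection: Collection) -> dict:
    """Return the text and link shown below the collection title."""
    if not collection.creator_name:
        # criterion: shows "Anonymous" if no creator set
        return {"text": "Anonymous", "href": None}
    # criterion: links to creator's profile (the URL shape is an assumption)
    return {
        "text": collection.creator_name,
        "href": f"/users/{collection.creator_slug}",
    }


def test_shows_creator_name():
    label = creator_label(Collection("Trips", "Ada", "ada"))
    assert label["text"] == "Ada"


def test_links_to_creator_profile():
    label = creator_label(Collection("Trips", "Ada", "ada"))
    assert label["href"] == "/users/ada"


def test_anonymous_when_no_creator():
    label = creator_label(Collection("Trips"))
    assert label == {"text": "Anonymous", "href": None}
```

the layout criteria ("appears below collection title", "works on mobile") would need a rendering or browser-level test, which this sketch leaves out.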

3. plan with tests first

here's where it gets interesting. when claude creates a plan, i've set up a hook that enforces test-first development. the plan literally cannot proceed unless it lists test files before implementation files.

this matters because:

- tests document expected behavior

- writing them first forces thinking about edge cases early

- they create accountability for what we're building
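a minimal sketch of what the "tests before implementation" check could look like. this is not the actual hook: the file-extension list and the naming rules for spotting test files are assumptions, and how the result gets reported back to claude code depends on the hook setup, which isn't shown here.

```python
import re

# Assumption: plans mention files as paths with one of these extensions.
FILE_PATTERN = re.compile(r"[\w./-]+\.(?:py|ts|tsx|js|jsx)\b")
# Assumption: test files live in test(s)/, start with test_, or use .test./.spec.
TEST_PATTERN = re.compile(r"(^|/)tests?/|(^|/)test_|\.(test|spec)\.")


def plan_is_test_first(plan_text: str) -> bool:
    """True if the plan lists a test file before any implementation file."""
    paths = FILE_PATTERN.findall(plan_text)
    is_test = [bool(TEST_PATTERN.search(path)) for path in paths]
    if True not in is_test:
        return False  # no test files listed at all
    first_test = is_test.index(True)
    first_impl = is_test.index(False) if False in is_test else len(paths)
    return first_test < first_impl
```

a plan that lists `tests/test_creator.py` and then `src/creator.py` passes. the same two files in the opposite order, or a plan with no test files, fails.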

4. multi-llm critique

when i approve a plan in claude code, a PostToolUse hook fires. it:

- checks the plan has tests (blocks if not)

- sends the plan to gemini 3 flash for architectural critique

- sends both the plan AND gemini's critique to codex for a second opinion

- returns everything to claude
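the chaining in those steps can be sketched like this. the two `ask_*` callables are stand-ins for however each model actually gets called (cli, api, openrouter), and the prompt wording is invented for the example. the tests check from the first step is left out.

```python
from typing import Callable

# A critic takes a prompt and returns the model's reply as text.
Critic = Callable[[str], str]


def critique_plan(plan: str, ask_gemini: Critic, ask_codex: Critic) -> str:
    """Chain two critiques and bundle everything for the primary model."""
    gemini_notes = ask_gemini(f"Critique the architecture of this plan:\n\n{plan}")
    # The second reviewer sees both the plan and the first critique.
    codex_notes = ask_codex(
        "Give a second opinion on this plan and the critique of it.\n\n"
        f"PLAN:\n{plan}\n\nCRITIQUE:\n{gemini_notes}"
    )
    return (
        f"## Plan\n{plan}\n\n"
        f"## Gemini critique\n{gemini_notes}\n\n"
        f"## Codex second opinion\n{codex_notes}"
    )
```

passing the model calls in as plain functions keeps the ordering logic testable without network access. swap in fakes that return canned strings and check that the second critic received the first critic's notes.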

why multiple models? they have different blind spots. gemini might catch an architectural issue, codex might notice a missing edge case. i've seen them disagree. that's valuable signal.

update: i've since evolved this into council planning. instead of reviewing claude's finished plan, all three models explore the problem in parallel. when they converge, high confidence. when they diverge, you've found the real design decisions.
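a rough sketch of the converge/diverge split. it assumes each model's exploration has already been boiled down to a `{decision: choice}` mapping, which is the hard part and isn't shown. the function name and data shape are hypothetical.

```python
def split_by_agreement(proposals: dict[str, dict[str, str]]):
    """Split decisions into ones every model agrees on and ones they don't.

    `proposals` maps model name -> {decision: choice}.
    """
    decisions = sorted({d for choices in proposals.values() for d in choices})
    converged, diverged = {}, {}
    for decision in decisions:
        # A model that said nothing about a decision is recorded as None.
        votes = {model: choices.get(decision) for model, choices in proposals.items()}
        distinct = set(votes.values())
        if len(distinct) == 1:
            converged[decision] = distinct.pop()  # high confidence
        else:
            diverged[decision] = votes  # a real design decision to make
    return converged, diverged
```

the `diverged` half is the interesting output: it lists each contested decision alongside who proposed what.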

5. implement

now claude implements with full context:

- the original user story

- acceptance criteria

- a critique-hardened plan

- test files to write first

6. vibecheck

this is the final gate. after implementation, i run /vibecheck which:

- rereads the original plan

- looks at what files changed

- checks: did we stay on course?

if we drifted, it flags it. no silent scope creep.
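the core of that comparison can be sketched as a set difference between planned and changed files. this is an illustration, not the actual /vibecheck command: both function names are hypothetical, and getting the planned file list out of the plan is assumed to happen elsewhere.

```python
import subprocess


def changed_files_since(base: str = "HEAD") -> list[str]:
    """Tracked files that differ from `base` (untracked files are not listed)."""
    result = subprocess.run(
        ["git", "diff", "--name-only", base],
        capture_output=True, text=True, check=True,
    )
    return [line for line in result.stdout.splitlines() if line]


def find_drift(planned_files, changed_files):
    """Compare what the plan said would change with what actually changed."""
    planned, changed = set(planned_files), set(changed_files)
    return {
        "unplanned": sorted(changed - planned),  # possible scope creep
        "missing": sorted(planned - changed),    # planned but never touched
    }
```

anything under `unplanned` is a candidate for scope creep. anything under `missing` is work the plan promised that didn't happen.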

why this works

the key insight is that ai coding isn't about prompting, it's about constraints.

you need:

- explicit goals (user stories, acceptance criteria)

- enforceable standards (test-first hooks)

- multiple perspectives (multi-llm critique)

- verification (vibecheck)

without these, you're just hoping the ai does what you want.

the tools

- claude code (opus 4.5): primary coding assistant

- opencode + gemini 3: architectural critique via openrouter

- codex cli: second opinion on plans

- claude code hooks: the glue that enforces all this

the directory structure

all persistent context lives in thoughts/:

thoughts/

├── CURRENT.md # Symlink to active handoff (auto-loaded on start)

├── handoffs/ # Timestamped session handoffs (via /handoff)

├── plans/ # Implementation plans (save after council synthesis)

├── research/ # Raw council perspectives (auto-saved)

└── scratch/ # Temporary files (gitignored)

| Folder | Populated By | Automatic? |
|---|---|---|
| research/ | collect-council.sh hook | yes |
| plans/ | Claude after synthesis | prompted |
| handoffs/ | /handoff command | manual |
| scratch/ | Council hooks | auto-cleaned |
| CURRENT.md | /handoff command | auto-linked |

the key distinction: research/ is fully automatic. plans/ requires claude to save after synthesizing (the hook prompts this). handoffs/ requires you to run /handoff.
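a minimal sketch of the layout and the CURRENT.md relinking, assuming a handoff is a markdown file named by timestamp. the function names and the filename format are made up for illustration. the real /handoff command may work differently.

```python
from datetime import datetime
from pathlib import Path

SUBDIRS = ("handoffs", "plans", "research", "scratch")


def init_thoughts(root: Path) -> Path:
    """Create thoughts/ and its subfolders if they don't exist yet."""
    thoughts = root / "thoughts"
    for name in SUBDIRS:
        (thoughts / name).mkdir(parents=True, exist_ok=True)
    return thoughts


def write_handoff(thoughts: Path, body: str, now: datetime) -> Path:
    """Save a timestamped handoff and point CURRENT.md at it."""
    # The filename format is an assumption.
    handoff = thoughts / "handoffs" / f"{now:%Y-%m-%d_%H%M}.md"
    handoff.write_text(body, encoding="utf-8")
    current = thoughts / "CURRENT.md"
    if current.is_symlink() or current.exists():
        current.unlink()  # drop the old link, keep the old handoff file
    # Relative target, so the link still resolves if the repo moves.
    current.symlink_to(Path("handoffs") / handoff.name)
    return handoff
```

older handoffs stay in handoffs/ untouched. only the symlink moves, so the next session always starts from the latest one.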

what's next

i'm writing deeper dives on each piece:

- how i got here: the journey through different ai coding tools

- context-behavior-constraint: acceptance criteria that map to tests

- multi-llm plan critique: the hook system in detail

- test-first enforcement: how to block plans without tests

- vibecheck: staying on course

- handoff: deliberate context reset with hooks automation

this workflow isn't perfect and i'm still iterating. but it's way better than "just prompt it and pray."

if you're building something similar or have ideas, i'm @kevinmanase on twitter.